ZooKeeper Sessions

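After initialization, a ZooKeeper client enters the CONNECTING state. Once it establishes a connection to a ZooKeeper server (or to one server of a ZooKeeper ensemble), it enters the CONNECTED state.

When the client is disconnected from the ZooKeeper server or stops receiving responses from it, it returns to the CONNECTING state, and it keeps receiving CONNECTION_LOSS during that time.

The ZooKeeper client then automatically picks new addresses from the server list, one by one, and tries to reconnect. If it succeeds, the state returns to CONNECTED.

If reconnection keeps failing past the session timeout (sessionTimeout), the server considers the session over. The client has no way of noticing this at that point.

When the client finally manages to reconnect to a ZooKeeper server, it receives Session Expired. In this case the application layer has to close the current session and then connect again.

In both the CONNECTING and the CONNECTED state, the client can be closed explicitly, which moves it to the CLOSED state.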
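The transitions described above can be written down as a small table. This is only a minimal sketch of the state model in this article: the event names (`connected`, `disconnected`, `sessionExpired`, `close`) and the helper functions are made up for illustration and are not part of any ZooKeeper client API.

```javascript
// Simplified model of the client-side session states described above.
// State and event names are illustrative, not a client library API.
const TRANSITIONS = {
  CONNECTING: { connected: "CONNECTED", sessionExpired: "EXPIRED", close: "CLOSED" },
  CONNECTED: { disconnected: "CONNECTING", close: "CLOSED" },
  EXPIRED: { close: "CLOSED" },
  CLOSED: {},
};

// Return the state reached from `state` on `event`, or throw if not allowed.
function nextState(state, event) {
  const next = TRANSITIONS[state] && TRANSITIONS[state][event];
  if (!next) {
    throw new Error(`invalid transition: ${state} on ${event}`);
  }
  return next;
}

// Replay a sequence of events starting from the initial CONNECTING state.
function replay(events) {
  return events.reduce(nextState, "CONNECTING");
}

// A short network blip: the session survives.
console.log(replay(["connected", "disconnected", "connected"])); // CONNECTED
// A long partition: on reconnect the client learns that the session expired.
console.log(replay(["connected", "disconnected", "sessionExpired"])); // EXPIRED
```

Keeping the transitions in one table makes it easy to see that EXPIRED is only reachable from CONNECTING, which matches the point that the client learns about expiration only once it reaches a server again.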

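When a session is established, the client receives a session id and a password created by the server. On every automatic reconnect the client sends the session id and password to the server, so the re-established session is still the original one. All of the automatic reconnection described above is implemented by the underlying API library, not by the application layer.

Testing the client's automatic reconnection to zk

Use cons to view the connections on the server side: after an automatic reconnect, the session id stays the same.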
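Comparing session ids by eye gets tedious, so the check can be scripted. The sketch below parses connection lines in the format of the cons output shown later in this article and compares the session ids held by one client before and after a reconnect. The function names are made up for illustration, and the "after" sample is hypothetical: it reuses the real lines with different source ports, which is roughly what a successful automatic reconnect would look like.

```javascript
// Parse one connection line of cons output into { client, fields }.
// Returns null for lines that are not connection lines.
function parseConsLine(line) {
  const match = line.trim().match(/^\/([\d.]+):(\d+)\[\d+\]\((.*)\)$/);
  if (!match) return null;
  const fields = {};
  for (const pair of match[3].split(",")) {
    const [key, value] = pair.split("=");
    fields[key] = value;
  }
  return { client: `${match[1]}:${match[2]}`, fields };
}

// Collect the sorted session ids held by one client IP.
function sessionIds(consOutput, clientIp) {
  return consOutput
    .split("\n")
    .map((line) => parseConsLine(line))
    .filter((conn) => conn && conn.client.startsWith(clientIp + ":"))
    .map((conn) => conn.fields.sid)
    .sort();
}

// True when the client holds exactly the same sessions in both outputs.
function sameSessions(before, after, clientIp) {
  return sessionIds(before, clientIp).join() === sessionIds(after, clientIp).join();
}

// Real lines from the test in this article.
const sample = [
  " /192.168.154.106:48146[1](queued=0,recved=99,sent=102,sid=0x6b364768a90001,lop=NA,est=1560000679478,to=40000,lcxid=0x5cfbb93f,lzxid=0x1300013dd4,lresp=1560000745134,llat=1,minlat=0,avglat=1,maxlat=6)",
  " /192.168.154.106:48149[1](queued=0,recved=7,sent=7,sid=0x6b364768a90000,lop=PING,est=1560000679478,to=40000,lcxid=0x5cfbb8b6,lzxid=0xffffffffffffffff,lresp=1560000732987,llat=0,minlat=0,avglat=0,maxlat=4)",
].join("\n");

// Hypothetical output after an automatic reconnect: new ports, same sids.
const afterReconnect = sample.replace(":48146", ":50001").replace(":48149", ":50002");

console.log(sessionIds(sample, "192.168.154.106"));
console.log(sameSessions(sample, afterReconnect, "192.168.154.106")); // true
```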

Reconnecting after Session Expired

How Session Expired happens

Let's review how a session comes to expire. The official documentation explains it clearly.

Session expiration is managed by the ZooKeeper cluster itself, not by the client. When the ZK client establishes a session with the cluster it provides a “timeout” value detailed above. This value is used by the cluster to determine when the client’s session expires. Expirations happens when the cluster does not hear from the client within the specified session timeout period (i.e. no heartbeat). At session expiration the cluster will delete any/all ephemeral nodes owned by that session and immediately notify any/all connected clients of the change (anyone watching those znodes). At this point the client of the expired session is still disconnected from the cluster, it will not be notified of the session expiration until/unless it is able to re-establish a connection to the cluster. The client will stay in disconnected state until the TCP connection is re-established with the cluster, at which point the watcher of the expired session will receive the “session expired” notification.

Example state transitions for an expired session as seen by the expired session’s watcher:

‘connected’ : session is established and client is communicating with cluster (client/server communication is operating properly)

…. client is partitioned from the cluster

‘disconnected’ : client has lost connectivity with the cluster

…. time elapses, after ‘timeout’ period the cluster expires the session, nothing is seen by client as it is disconnected from cluster

…. time elapses, the client regains network level connectivity with the cluster

‘expired’ : eventually the client reconnects to the cluster, it is then notified of the expiration

So the client has to reconnect once it receives the expiration.
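The rule behind the timeline quoted above fits in a few lines. This is a rough sketch under a simplifying assumption: the server is taken to expire the session once it has heard nothing from the client for the full session timeout, and the moment of disconnection is used as an approximation for the last heartbeat. The function name is made up; the sample values are the timeout (to=40000) and the log timestamps from the test in this article.

```javascript
// Decide what a client observes when it regains connectivity.
// Assumption: the session expires once the server has heard nothing
// from the client for sessionTimeoutMs.
function outcomeOnReconnect(disconnectedAtMs, reconnectedAtMs, sessionTimeoutMs) {
  const silentMs = reconnectedAtMs - disconnectedAtMs;
  return silentMs < sessionTimeoutMs ? "connected" : "expired";
}

// Partition first logged at 21:38:52, reconnect logged at 22:14:20,
// session timeout 40000 ms (the `to` field in the cons output).
const partitionedAt = Date.parse("2019-06-08T21:38:52Z");
const reconnectedAt = Date.parse("2019-06-08T22:14:20Z");
console.log(outcomeOnReconnect(partitionedAt, reconnectedAt, 40000)); // expired

// A 5 second blip stays within the timeout, so the session survives.
console.log(outcomeOnReconnect(0, 5000, 40000)); // connected
```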

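Testing reconnection after Session Expired

Because our production services are written in Node, the client we chose is node-zookeeper, a module that wraps the ZooKeeper C API.

The test environment for the experiments below:

zk ensemble: 192.168.154.103:2181,192.168.154.104:2181,192.168.154.105:2181

Client: 192.168.154.106 uhost-access

The reconnection process goes as follows:

(1) Start the uhost-access service on the client: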

    [root@192-168-153-51 node-common]
     /192.168.154.106:48146[1](queued=0,recved=99,sent=102,sid=0x6b364768a90001,lop=NA,est=1560000679478,to=40000,lcxid=0x5cfbb93f,lzxid=0x1300013dd4,lresp=1560000745134,llat=1,minlat=0,avglat=1,maxlat=6)
     /192.168.154.106:48149[1](queued=0,recved=7,sent=7,sid=0x6b364768a90000,lop=PING,est=1560000679478,to=40000,lcxid=0x5cfbb8b6,lzxid=0xffffffffffffffff,lresp=1560000732987,llat=0,minlat=0,avglat=0,maxlat=4)
    [root@192-168-153-51 node-common]
    [root@192-168-153-51 node-common]

(2) Add firewall rules on the client to simulate a network partition

    iptables -A OUTPUT -d 192.168.154.103 -p tcp --dport 2181 -j DROP
    iptables -A OUTPUT -d 192.168.154.104 -p tcp --dport 2181 -j DROP
    iptables -A OUTPUT -d 192.168.154.105 -p tcp --dport 2181 -j DROP
    iptables -A INPUT -s 192.168.154.103 -p tcp --sport 2181 -j DROP
    iptables -A INPUT -s 192.168.154.104 -p tcp --sport 2181 -j DROP
    iptables -A INPUT -s 192.168.154.105 -p tcp --sport 2181 -j DROP

The client's automatic reconnection to zk now fails, and it stays in the CONNECTING state.

These errors are logged by the zookeeper module (node-zk.cpp):

    2019-06-08 21:38:52,739:22724:ZOO_ERROR@handle_socket_error_msg@1666: Socket [192.168.154.103:2181] zk retcode=-7, errno=110(Connection timed out): connection to 192.168.154.103:2181 timed out (exceeded timeout by 1ms)
    2019-06-08 21:38:52,742:22724:ZOO_ERROR@yield@234: yield:zookeeper_interest returned error: -7 - operation timeout

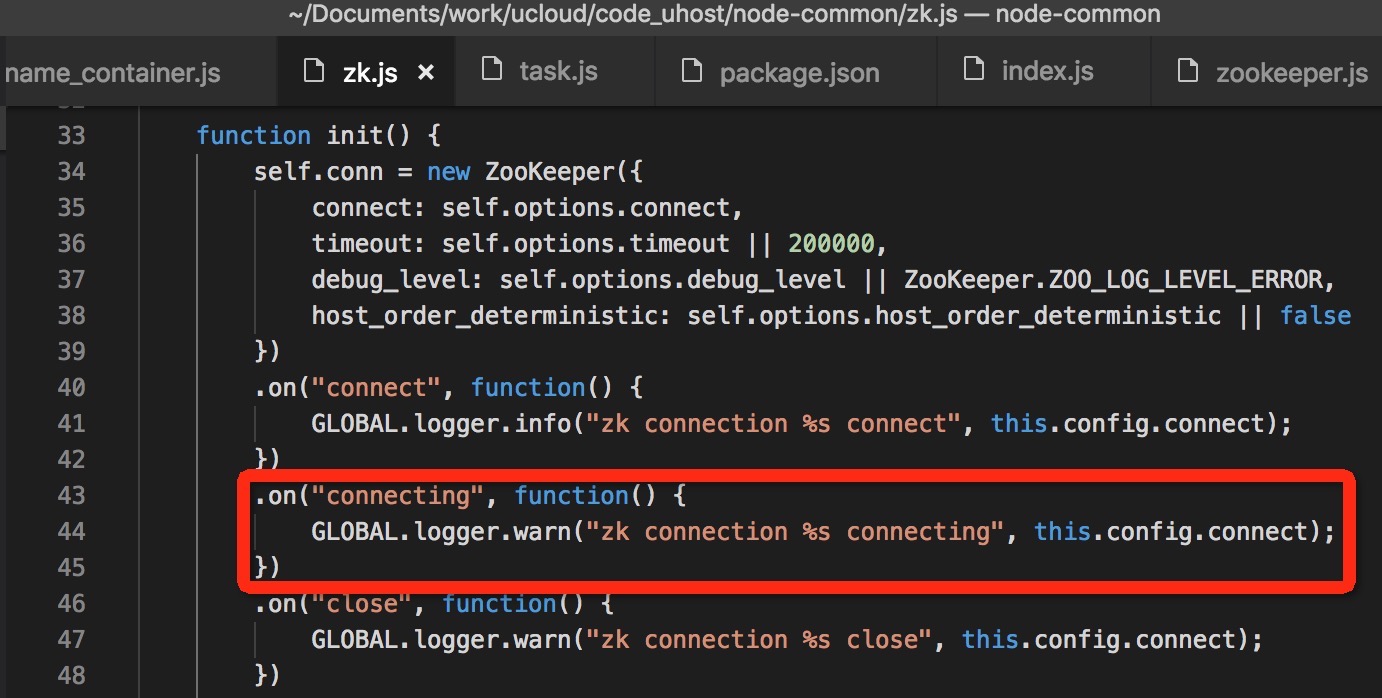

zk.js (our wrapper code on top of node-zookeeper) prints the on connecting log:

    [2019-06-08 21:38:52.739] [WARN] uhost-access - zk connection 192.168.154.103:2181,192.168.154.104:2181,192.168.154.105:2181 connecting
    [2019-06-08 21:38:53.016] [WARN] uhost-access - zk connection 192.168.154.103:2181,192.168.154.104:2181,192.168.154.105:2181 connecting

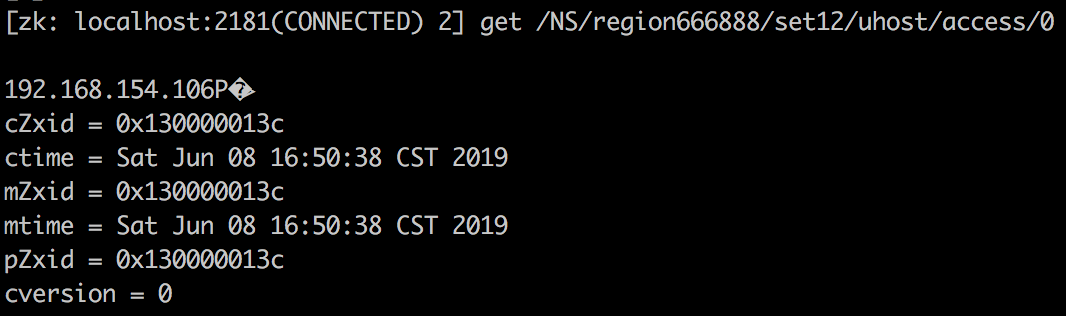

The ephemeral node /NS/region666888/set12/uhost/access/0 and the session are cleaned up.

(3) Remove the firewall rules to simulate network recovery

Clear the firewall rules: iptables -F

(4) Reconnect

When the client now automatically reconnects to zk, it receives a session expiration error.

    2019-06-08 22:14:20,118:22724:ZOO_ERROR@handle_socket_error_msg@1764: Socket [192.168.154.104:2181] zk retcode=-112, errno=116(Stale file handle): sessionId=0x6b364768a90000 has expired.
    2019-06-08 22:14:20,118:22724:ZOO_ERROR@main_watcher@380: Session expired. Shutting down...
    2019-06-08 22:14:20,124:22724:ZOO_ERROR@zk_io_cb@278: yield: zookeeper_process returned error: -112 - session expired
    2019-06-08 22:14:39,701:22724:ZOO_ERROR@handle_socket_error_msg@1764: Socket [192.168.154.103:2181] zk retcode=-112, errno=116(Stale file handle): sessionId=0x6b364768a90001 has expired.
    2019-06-08 22:14:39,702:22724:ZOO_ERROR@main_watcher@380: Session expired. Shutting down...
    2019-06-08 22:14:39,707:22724:ZOO_ERROR@zk_io_cb@278: yield: zookeeper_process returned error: -112 - session expired

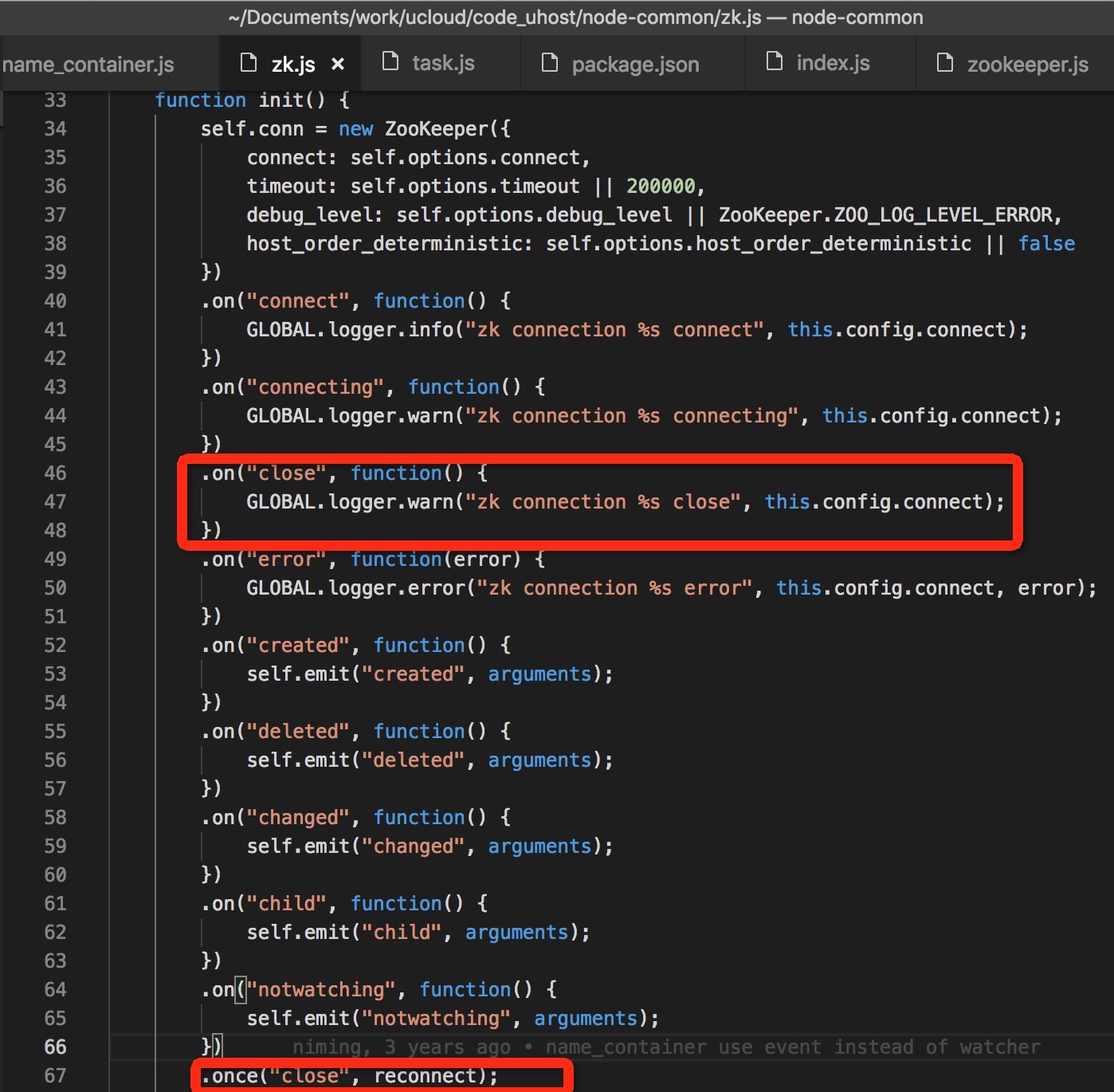

node-zookeeper has a watcher that checks for ZOO_EXPIRED_SESSION_STATE. When that state is observed, it emits a close event (on_closed in the C++ source).

    static void main_watcher(zhandle_t *zzh, int type, int state, const char *path, void* context) {
        Nan::HandleScope scope;
        LOG_DEBUG(("main watcher event: type=%d, state=%d, path=%s", type, state, (path ? path: "null")));
        ZooKeeper *zk = static_cast<ZooKeeper *>(context);

        if (type == ZOO_SESSION_EVENT) {
            if (state == ZOO_CONNECTED_STATE) {
                zk->myid = *(zoo_client_id(zzh));
                zk->DoEmitPath(Nan::New(on_connected), path);
            } else if (state == ZOO_CONNECTING_STATE) {
                zk->DoEmitPath(Nan::New(on_connecting), path);
            } else if (state == ZOO_AUTH_FAILED_STATE) {
                LOG_ERROR(("Authentication failure. Shutting down...\n"));
                zk->realClose(ZOO_AUTH_FAILED_STATE);
            } else if (state == ZOO_EXPIRED_SESSION_STATE) {
                LOG_ERROR(("Session expired. Shutting down...\n"));
                zk->realClose(ZOO_EXPIRED_SESSION_STATE);
            }
        }
        ......
    }

    void realClose(int code) {
        ......
        if (zhandle) {
            ......
            // Close the timer and finally Unref the ZooKeeper instance when it's done
            // Unrefing after is important to avoid memory being freed too early.
            uv_close((uv_handle_t*) &zk_timer, timer_closed);
            Nan::HandleScope scope;
            DoEmitClose(Nan::New(on_closed), code);
        }
    }

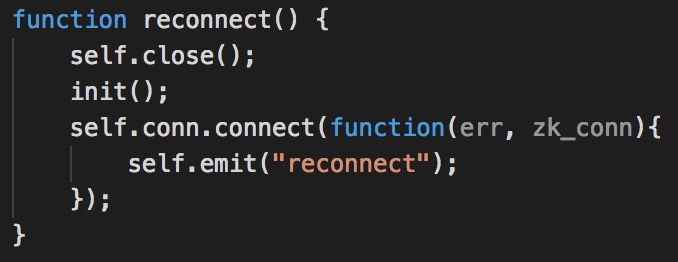

Our own upper-layer business library catches this close event and reconnects to zk.

After the reconnect the session id has changed: a new session has been established.

    [root@192-168-153-51 node-common]
    [root@192-168-153-51 node-common]
    [root@192-168-153-51 node-common]
     /192.168.154.106:40100[1](queued=0,recved=56,sent=56,sid=0x26b3647662b0002,lop=PING,est=1560003260125,to=40000,lcxid=0x5cfbc659,lzxid=0x1300016fae,lresp=1560003948592,llat=0,minlat=0,avglat=0,maxlat=1)
     /192.168.154.106:40106[1](queued=0,recved=837,sent=837,sid=0x26b3647662b0003,lop=NA,est=1560003279705,to=40000,lcxid=0x5cfbc841,lzxid=0x1300016fde,lresp=1560003957541,llat=1,minlat=0,avglat=1,maxlat=17)

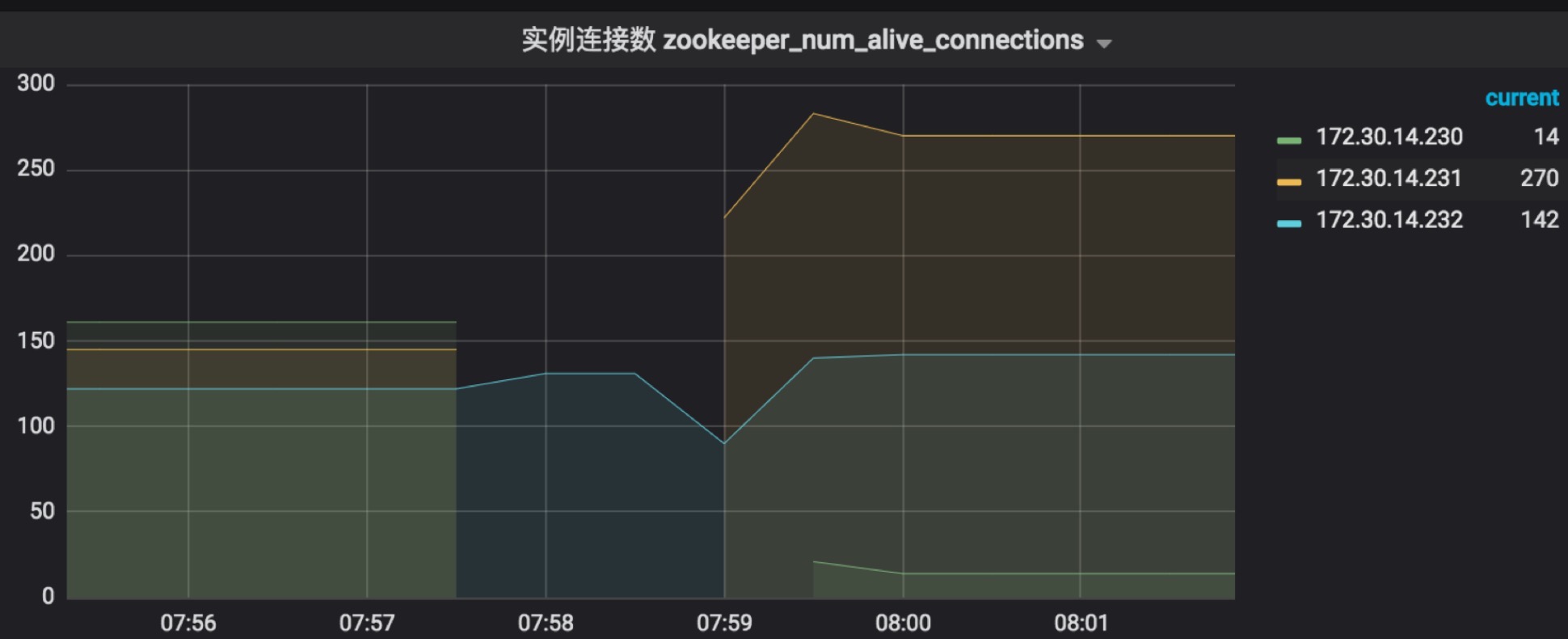

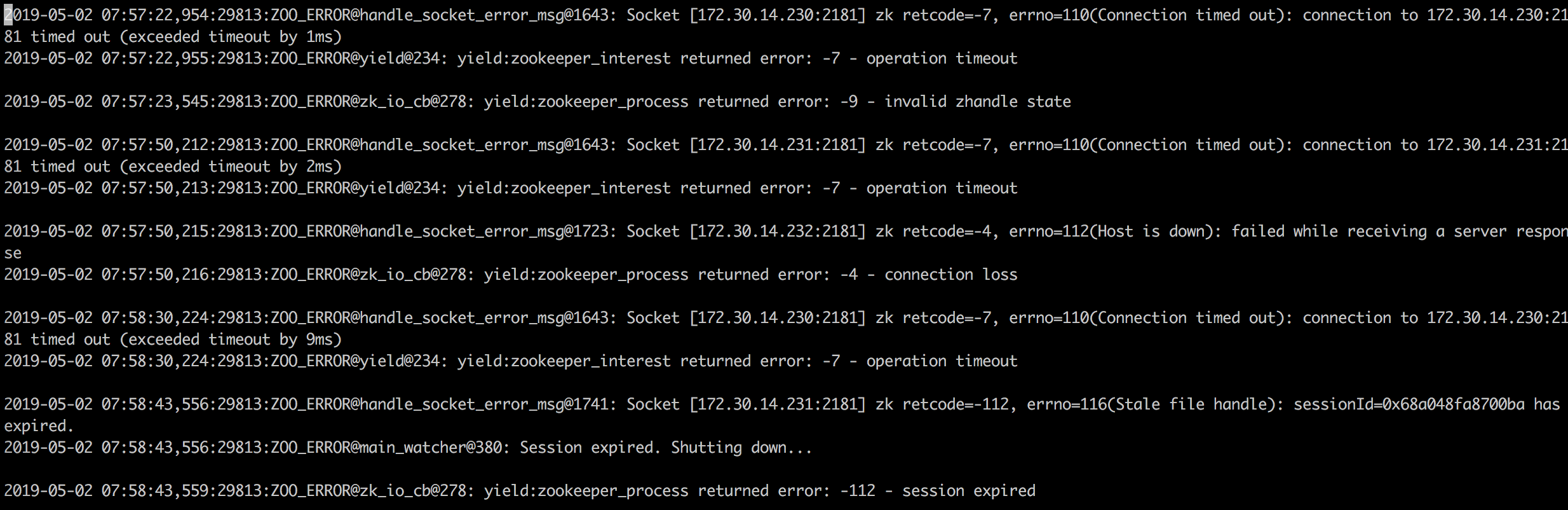
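The pattern in step (4), catching the close event in an upper layer and building a fresh session, can be sketched without a real ZooKeeper. In the example below `FakeClient` and `SessionManager` are hypothetical stand-ins: the fake client only mimics "fire the close handler when the session expires", and this is not our actual zk.js code or the node-zookeeper API.

```javascript
// Hypothetical stand-in for a client handle. It only mimics the one
// behaviour that matters here: calling the close handler on expiration.
let nextSessionId = 1;
class FakeClient {
  constructor() {
    this.sessionId = nextSessionId++;
    this.closeHandlers = [];
  }
  once(event, handler) {
    if (event === "close") this.closeHandlers.push(handler);
  }
  expire() {
    const handlers = this.closeHandlers;
    this.closeHandlers = [];
    handlers.forEach((handler) => handler());
  }
}

// Replaces the client with a fresh one whenever it closes, then runs the
// registration callback again, since the ephemeral nodes of the old
// session are gone.
class SessionManager {
  constructor(createClient, register) {
    this.createClient = createClient;
    this.register = register;
    this.start();
  }
  start() {
    this.client = this.createClient();
    this.client.once("close", () => this.start());
    this.register(this.client);
  }
}

const registered = [];
const manager = new SessionManager(
  () => new FakeClient(),
  (client) => registered.push(client.sessionId)
);
const oldId = manager.client.sessionId;
manager.client.expire(); // simulate Session Expired
console.log(oldId, manager.client.sessionId, registered); // 1 2 [ 1, 2 ]
```

The new client gets a different session id, just as the cons output above shows, and the registration runs once per session.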

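Analysis of the reconnection failure in the Singapore data center

Production issue: on May 2, set11 in the Singapore data center ran into a problem. zk malfunctioned and was out of service for 2 minutes, and the host services (the clients) received session expired errors.

The reconnect did not succeed at the time, although testing confirms that the reconnection logic is there. The actual cause on the zk side could not be determined, and the problem could not be reproduced. A reasonable guess is that zk had not yet recovered when the reconnect was attempted, so the reconnect failed.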

Reconnecting after zk data is deleted

Problem description

Stop zk, delete the node data, start zk → automatic registration does not succeed, and the service has to be restarted.

Test steps

zk ensemble: service zookeeper stop

Delete the snapshot and log data on the zk ensemble: rm -f /data/zookeeper/data/version-2/* and rm -f /data/zookeeper/log/version-2/*

Start the zk ensemble: service zookeeper start

Automatic reconnection still tries to resume the original session, but because the data has been deleted, the client cannot connect to zk with the original session and keeps failing.

Error log of the client (192.168.154.106 uhost-access):

    2019-06-09 22:59:33,339:27610:ZOO_ERROR@handle_socket_error_msg@1746: Socket [192.168.154.105:2181] zk retcode=-4, errno=112(Host is down): failed while receiving a server response
    2019-06-09 22:59:33,339:27610:ZOO_ERROR@zk_io_cb@278: yield: zookeeper_process returned error: -4 - connection loss
    2019-06-09 22:59:33,340:27610:ZOO_ERROR@handle_socket_error_msg@1746: Socket [192.168.154.105:2181] zk retcode=-4, errno=112(Host is down): failed while receiving a server response
    2019-06-09 22:59:33,341:27610:ZOO_ERROR@zk_io_cb@278: yield: zookeeper_process returned error: -4 - connection loss

Result: none of the node service's node data was registered again automatically. This effectively reproduces the production problem seen after the Hong Kong public zk upgrade lost its data: if the business code does not register again, the service has to be restarted.

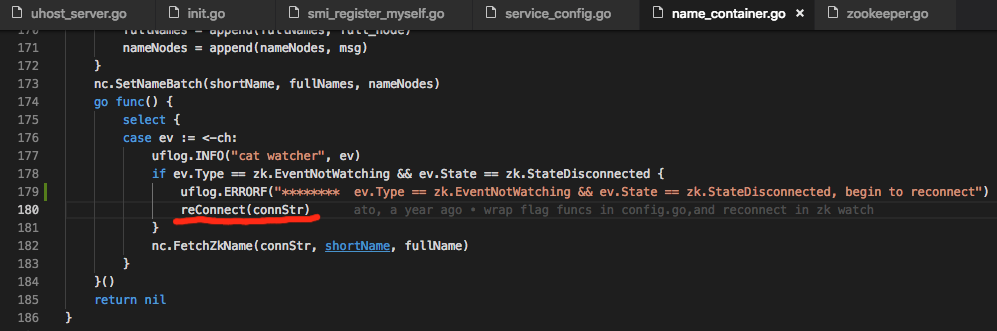

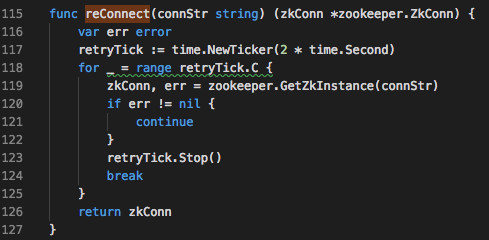

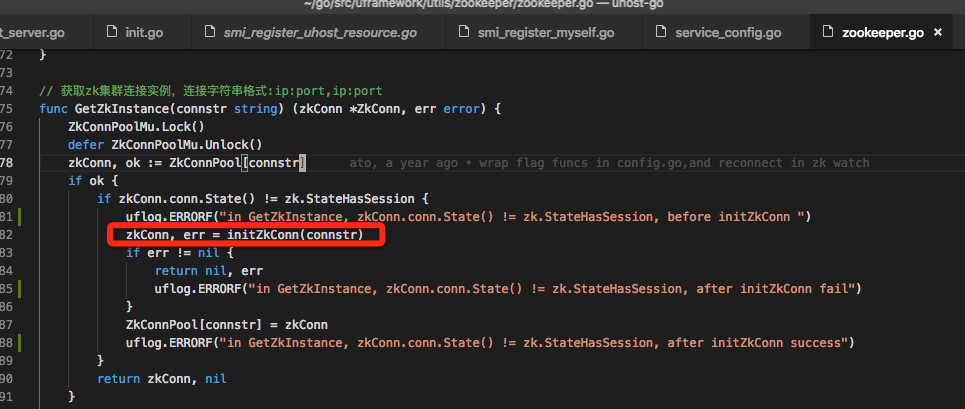

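How the Go framework uframework handles it

The Go framework uframework uses a Golang zk client (go-zookeeper) and wraps it in a file called name_container.go, which implements the upper-layer reconnection logic. When the ZooKeeper ensemble's data is wiped and the ensemble is started again, the service's nodes can still register themselves again.

Its implementation has two places with reconnection logic:

(1) In name_container.go → func FetchZkName, a watcher is registered for child changes on every node we are interested in.

    childs, _, ch, err := zkConn.GetChildrenWatcher(fullName)

When the connection drops, an event fires and the client reconnects. FetchZkName is then called again, which ensures that there is always a goroutine waiting for this event.

After repeating the test from 4.1 (deleting the zk data and restarting zk), the client can reconnect (which amounts to creating a new session).

Our service watches three nodes: uhost-access, uimage3-access and uimage3-manager. So there are three reconnect actions, but only one of them succeeds.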

    [2019-06-09 23:57:21.471566] [INFO]cat watcher {EventNotWatching StateDisconnected /NS/region666888/set12/uhost/access zk: zookeeper is closing}
    [2019-06-09 23:57:21.471724] [ERROR]******** ev.Type == zk.EventNotWatching && ev.State == zk.StateDisconnected, begin to reconnect
    [2019-06-09 23:57:21.471742] [INFO]cat watcher {EventNotWatching StateDisconnected /NS/region666888/set12/uimage3/access zk: zookeeper is closing}
    [2019-06-09 23:57:21.471781] [INFO]cat watcher {EventNotWatching StateDisconnected /NS/region666888/set12/uimage3/manager zk: zookeeper is closing}
    [2019-06-09 23:57:21.471786] [ERROR]******** ev.Type == zk.EventNotWatching && ev.State == zk.StateDisconnected, begin to reconnect
    [2019-06-09 23:57:21.471867] [ERROR]******** ev.Type == zk.EventNotWatching && ev.State == zk.StateDisconnected, begin to reconnect
    [2019-06-09 23:57:23.472008] [ERROR]in GetZkInstance, zkConn.conn.State() != zk.StateHasSession, before initZkConn
    [2019-06-09 23:57:24.481209] [ERROR]Connect zk Servers [192.168.154.103:2181 192.168.154.104:2181 192.168.154.105:2181] failed:can not connect remote servers
    [2019-06-09 23:57:24.481379] [ERROR]in GetZkInstance, zkConn.conn.State() != zk.StateHasSession, after initZkConn fail
    [2019-06-09 23:57:24.481427] [ERROR]in GetZkInstance, zkConn.conn.State() != zk.StateHasSession, before initZkConn
    [2019-06-09 23:57:25.483472] [ERROR]Connect zk Servers [192.168.154.103:2181 192.168.154.104:2181 192.168.154.105:2181] failed:can not connect remote servers
    [2019-06-09 23:57:25.483658] [ERROR]in GetZkInstance, zkConn.conn.State() != zk.StateHasSession, after initZkConn fail
    [2019-06-09 23:57:25.483674] [ERROR]in GetZkInstance, zkConn.conn.State() != zk.StateHasSession, before initZkConn
    [2019-06-09 23:57:26.492263] [ERROR]Connect zk Servers [192.168.154.103:2181 192.168.154.104:2181 192.168.154.105:2181] failed:can not connect remote servers
    [2019-06-09 23:57:26.492371] [ERROR]in GetZkInstance, zkConn.conn.State() != zk.StateHasSession, after initZkConn fail
    [2019-06-09 23:57:26.492390] [ERROR]in GetZkInstance, zkConn.conn.State() != zk.StateHasSession, before initZkConn
    [2019-06-09 23:57:26.515713] [ERROR]in GetZkInstance, zkConn.conn.State() != zk.StateHasSession, after initZkConn success

(2) Triggered when the service periodically registers its own node in zk.

Note: this was tested with all the reconnect calls from (1) commented out; otherwise the two mechanisms would get mixed up.

At second 42, zk had not recovered yet, so the connection failed. At second 47, the client connected to zk and registered its own node successfully.

    [2019-06-10 00:19:42.225063] [ERROR] in GetZkInstance, zkConn.conn.State() != zk.StateHasSession, before initZkConn
    [2019-06-10 00:19:43.232714] [ERROR] Connect zk Servers [192.168.154.103:2181 192.168.154.104:2181 192.168.154.105:2181] failed:can not connect remote servers
    [2019-06-10 00:19:43.232775] [ERROR] in GetZkInstance, zkConn.conn.State() != zk.StateHasSession, after initZkConn fail
    [2019-06-10 00:19:43.232877] [ERROR] [register_myself] connect zk server error
    [2019-06-10 00:19:47.224898] [ERROR] in GetZkInstance, zkConn.conn.State() != zk.StateHasSession, before initZkConn
    [2019-06-10 00:19:47.254394] [ERROR] in GetZkInstance, zkConn.conn.State() != zk.StateHasSession, after initZkConn success
    [2019-06-10 00:19:47.302364] [INFO] [register_myself] complte register, /NS/region666888/set12/uhost/scheduler/0
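With several triggers (three watchers plus the periodic registration) all asking for a reconnect at about the same time, one way to make sure only one of them actually reconnects is a generation counter. The sketch below illustrates that idea only; it is not how uframework implements it, and `ReconnectGuard`, `request` and `connectFn` are names invented for this example.

```javascript
// Let only the first of several concurrent reconnect requests reconnect.
// connectFn is supplied by the caller and returns true on success.
class ReconnectGuard {
  constructor(connectFn) {
    this.connectFn = connectFn;
    this.generation = 0; // bumped on every successful reconnect
  }

  // seenGeneration is the generation the caller observed when its watcher
  // fired. Returns "reconnected", "skipped" or "failed".
  request(seenGeneration) {
    if (seenGeneration !== this.generation) {
      return "skipped"; // another caller already replaced the connection
    }
    if (!this.connectFn()) {
      return "failed"; // generation unchanged, so a later caller may retry
    }
    this.generation += 1;
    return "reconnected";
  }
}

let connects = 0;
const guard = new ReconnectGuard(() => {
  connects += 1;
  return true;
});

// Three watchers fire for the same lost connection (generation 0).
const watched = ["uhost-access", "uimage3-access", "uimage3-manager"];
const outcomes = watched.map(() => guard.request(0));
console.log(outcomes, connects); // [ 'reconnected', 'skipped', 'skipped' ] 1
```

A failed attempt leaves the generation untouched, so the next trigger, for example the periodic registration, gets to try again once zk is back.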

A side note:

When a client (session) becomes partitioned from the ZK serving cluster it will begin searching the list of servers that were specified during session creation. Eventually, when connectivity between the client and at least one of the servers is re-established, the session will either again transition to the “connected” state (if reconnected within the session timeout value) or it will transition to the “expired” state (if reconnected after the session timeout). It is not advisable to create a new session object (a new ZooKeeper.class or zookeeper handle in the c binding) for disconnection. The ZK client library will handle reconnect for you. In particular we have heuristics built into the client library to handle things like “herd effect”, etc… Only create a new session when you are notified of session expiration (mandatory).

Improving zk.js in node-common

With the current logic there is exactly one retry, when the close event is received. A periodic check-and-retry mechanism is missing.

Modeled on the reconnection logic in 4.2 (the Go framework), this is also quick to implement in Node.

    ZkClass.prototype.connect = function () {
        var self = this;
        var f = new Future;

        // Drop the old handle, create a new one and connect again.
        function reconnect() {
            self.close();
            init();
            self.conn.connect(function (err, zk_conn) {
                self.emit("reconnect");
            });
        }

        function init() {
            self.conn = new ZooKeeper({
                connect: self.options.connect,
                timeout: self.options.timeout || 200000,
                debug_level: self.options.debug_level || ZooKeeper.ZOO_LOG_LEVEL_ERROR,
                host_order_deterministic: self.options.host_order_deterministic || false
            })
            ......
            .once("close", reconnect);
            ......
        }

        init();
        self.conn.connect(function (err, zk_conn) {
            // Resolve the future exactly once.
            if (err) {
                f.throw(err);
            } else {
                f.return(zk_conn);
            }
        });
        var res = f.wait();

        // Periodic check: every 10 seconds, reconnect if not connected.
        FunctionPool.register("zk_" + uuid.v4() + "_worker", function () {
            if (self.conn.state != ZkClass.ZOO_CONNECTED_STATE) {
                GLOBAL.logger.info(
                    "self.conn.state: %d, not ZOO_CONNECTED_STATE(3), begin to reconnect",
                    self.conn.state
                );
                reconnect();
            }
        }, 10000);

        return res;
    }
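Why the periodic check matters is easier to see in a simulation than against a live ensemble. The sketch below runs the same "reconnect if not connected" check against a fake connection whose server only comes back after a few attempts, which is the situation we suspect in the Singapore incident. Everything here is a stand-in: `makeChecker` and `fakeConn` are invented names, the fake connection has nothing to do with node-zookeeper, and the state values follow the ZooKeeper C client (3 for connected, as in the log message above, and 1 for connecting).

```javascript
const ZOO_CONNECTING_STATE = 1;
const ZOO_CONNECTED_STATE = 3;

// Build a check function for any object with a `state` field and a
// `reconnect()` method. Returns true when a reconnect was attempted.
function makeChecker(conn, log = () => {}) {
  return function check() {
    if (conn.state === ZOO_CONNECTED_STATE) return false;
    log(`state ${conn.state} is not connected, reconnecting`);
    conn.reconnect();
    return true;
  };
}

// Fake connection: the first `downTicks` reconnect attempts fail because
// the server is still down; the next one succeeds.
function fakeConn(downTicks) {
  return {
    state: ZOO_CONNECTING_STATE,
    attempts: 0,
    reconnect() {
      this.attempts += 1;
      if (this.attempts > downTicks) this.state = ZOO_CONNECTED_STATE;
    },
  };
}

const conn = fakeConn(2);
const check = makeChecker(conn);
// Four ticks of the periodic check, run by hand instead of on a timer.
const results = [check(), check(), check(), check()];
console.log(results, conn.attempts); // [ true, true, true, false ] 3
```

A single retry on the close event corresponds to the first tick only and would leave this connection down; the repeated check keeps trying until the server is back and then goes quiet. In a real service the check would run on a timer, for example with `setInterval(check, 10000)`.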

Reference links